By: Carl Austins

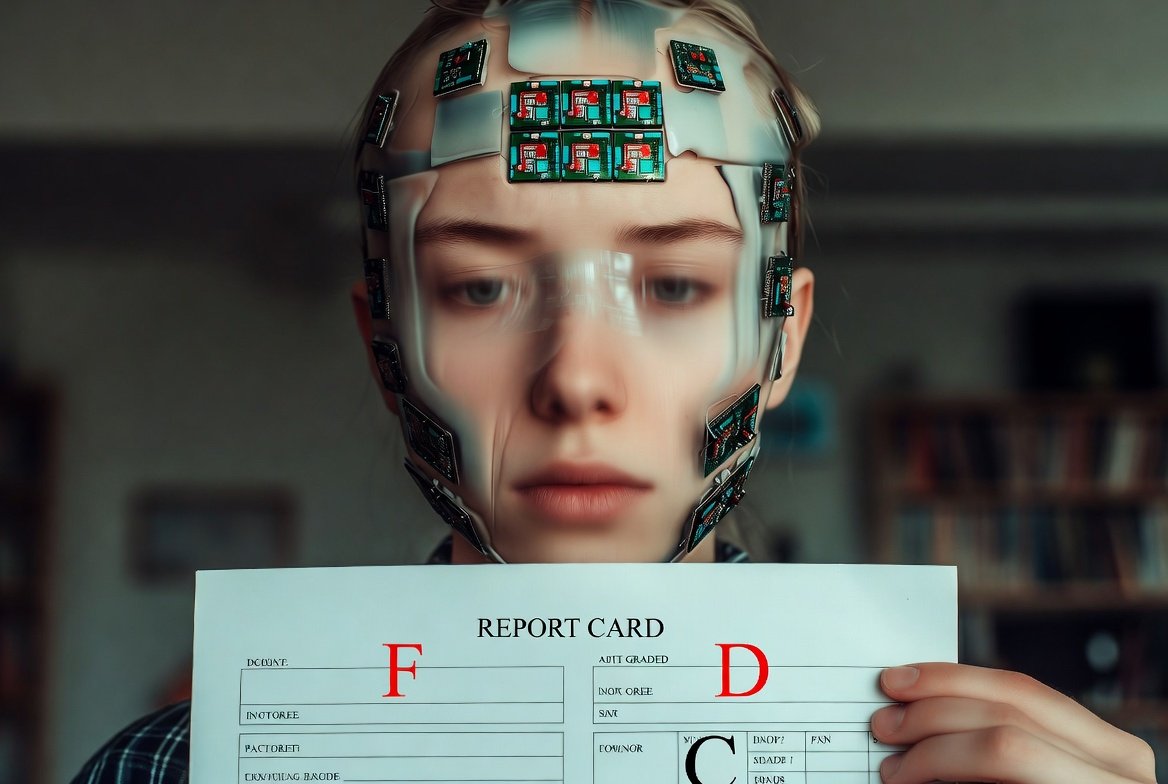

As a concerned graduate student studying the intersection of technology and society, I’ve become deeply troubled by the rapid expansion of artificial intelligence and the consequences it may have for human understanding. While AI offers unprecedented access to information, it may inadvertently dilute the quality of knowledge, distort truth, and increase the spread of misinformation.

As I move deeper into graduate-level research, I can’t shake an unsettling thought: AI is simultaneously giving us more information than humanity has ever possessed and eroding the very foundations of how we understand information. The contradiction feels almost poetic—like watching a dam burst while we’re still standing on the riverbank, admiring the speed of the water.

We are living through a moment that future historians might describe as the Inflection Point of Knowledge: the era where information became infinite, effortless, and dangerously unfiltered.

The Disappearance of Productive Struggle

One of the most consistent themes across educational psychology is the concept of “desirable difficulty,” coined by Robert Bjork. The idea is simple: we learn more deeply when we struggle constructively. Graduate school embodies this principle—hours spent reading, analyzing, cross-checking sources, discussing contradictory viewpoints.

AI can eradicate that difficulty.

The friction that once forced us to think, slowly and painfully, is disappearing. And without that friction, the mental habits that support deep understanding may weaken. This isn’t purely theoretical: researchers have raised similar concerns about how we offload thinking to calculators and GPS navigation, and many people who grew up with turn-by-turn directions no longer feel confident reading a map.

What happens when people stop reading deeply?

What happens when shortcut thinking becomes the norm?

History Has Seen This Before

Whenever a revolutionary technology expands access to information, society celebrates—but also suffers consequences.

The Printing Press (15th century)

The printing press democratized knowledge, but it also led to an explosion of pamphlets spreading misinformation, political propaganda, and unverified claims. In the early 16th century, Martin Luther used it to challenge the Catholic Church; countless others used it to distribute pseudoscience and fear.

The irony is historic:

A tool built to spread knowledge also accelerated misinformation.

The Radio Era (early 20th century)

Radio brought mass communication into homes, but it also amplified voices like Father Coughlin—who spread conspiracy theories to millions. Historians note that radio’s influence on public opinion outpaced society’s ability to vet content.

The Internet (late 20th century)

The early internet was a miracle, offering unprecedented access to global knowledge. But it quickly became a breeding ground for misinformation, echo chambers, and mass content duplication. A large 2018 study published in Science found that false news spread farther and faster on Twitter than true news, likely because it was more novel and provoked stronger emotional reactions.

AI is repeating the pattern—only exponentially faster.

The Feedback Loop of Diluted Information

AI systems learn by consuming vast amounts of text, images, and data from the web. But here’s the problem:

- If AI-generated content floods the internet

- Then future AI models scrape that content as training data

- And over time, models become more detached from factual, human-verified ideas

This isn’t purely hypothetical. AI researchers have described a phenomenon they call model collapse: models trained recursively on AI-generated content gradually lose the rarer parts of the original data and degrade in quality, coherence, and factuality. It’s like making photocopies of photocopies; the quality deteriorates with every generation.
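To make the photocopy analogy a little more concrete, here is a toy simulation in Python. It is a sketch under heavy simplifying assumptions, not the setup used in the model-collapse research: each "model" is just a bell curve (a Gaussian) fitted to a small batch of data, and each new generation learns only from samples produced by the previous one. The function name `simulate_collapse` and its parameters are hypothetical, chosen purely for illustration.

```python
import random
import statistics


def simulate_collapse(n_samples=10, generations=200, seed=0):
    """Hypothetical toy model of recursive training.

    Each generation fits a Gaussian (mean and spread) to the previous
    generation's data, then produces new data by sampling from that fit.
    Returns the spread (population standard deviation) of every generation.
    """
    rng = random.Random(seed)  # seeded so the run is reproducible
    # Generation 0 stands in for "human" data: draws from a standard normal.
    data = [rng.gauss(0.0, 1.0) for _ in range(n_samples)]
    spreads = [statistics.pstdev(data)]
    for _ in range(generations):
        # "Train" the next model on whatever data currently exists.
        # pstdev is the maximum-likelihood estimate, which is slightly biased low.
        mu = statistics.fmean(data)
        sigma = statistics.pstdev(data)
        # "Publish" the model's output: the next generation sees only synthetic data.
        data = [rng.gauss(mu, sigma) for _ in range(n_samples)]
        spreads.append(statistics.pstdev(data))
    return spreads


if __name__ == "__main__":
    spreads = simulate_collapse()
    for gen in (0, 50, 100, 150, 200):
        print(f"generation {gen:3d}: spread = {spreads[gen]:.6g}")
```

Because each fit sees only a handful of synthetic samples, small estimation errors compound from one generation to the next, and the spread of the data tends to shrink toward zero: extreme values become rarer and rarer until the "model" can only repeat a narrow slice of what the original data contained. Real AI systems are vastly more complex, so this shows the flavor of the feedback loop rather than its actual dynamics.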

The more polluted the information landscape becomes, the harder it is for AI to distinguish truth from noise. And if AI can’t distinguish it, neither can the people relying on AI for answers.

The Risk of Cognitive Complacency

Convenience is seductive.

When a machine gives us instant explanations, we can forget how to question them. This isn’t new. In Plato’s Phaedrus, Socrates warns that the invention of writing would weaken human memory, because people would no longer practice remembering. Centuries later, some scholars worried that the printing press would cheapen intellectual authority.

Their fears weren’t entirely misplaced.

Each innovation changed how society thought—sometimes for the worse.

The concern today is not just that AI gives us answers, but that it gives us confident answers. And confidence, even when misplaced, has a psychological effect on the reader. People tend to trust coherent explanations—even if they are factually wrong.

The Human Signal Is Fading in the Noise

If knowledge is power, then diluted knowledge is dangerous.

We are moving toward a world where genuine expertise—built through discipline, specialization, and years of intellectual struggle—is drowned out by AI-generated content that appears equally polished. This is not just an academic concern; it’s a societal one.

- How does a high school student know which explanation of a scientific concept is correct when AI generates countless variations?

- How does a researcher verify sources when citations themselves may be AI-fabricated?

- How does an AI model differentiate between real peer-reviewed research and synthetic content designed to imitate it?

The human voice risks becoming just another signal in a sea of algorithms.

AI Needs Us More Than We Realize

AI is not a self-sustaining intelligence. It is a reflection of us—our data, our knowledge, our culture, our failures. Without humans maintaining high standards of truth, rigor, and verification, AI becomes directionless.

We are the custodians of the informational ecosystem.

If we allow it to fill with unverified, synthetic content, AI will lose its ability to help us. It will lose the anchor that keeps it tethered to reality.

A Call to Preserve the Integrity of Knowledge

I don’t think AI is inherently harmful. I think it is powerful—and power without responsibility is always risky.

What makes knowledge meaningful isn’t its accessibility; it’s our relationship with it. Knowledge requires interpretation, context, skepticism, humility, and effort. These are human qualities that no machine can replicate.

If we surrender those qualities, we risk becoming passive consumers of information rather than active participants in understanding.

The future of knowledge depends on whether we choose to remain intellectually engaged or outsource thinking entirely.

Because if we lose the ability to filter truth from noise, we lose the essence of what it means to understand.
